
Re-Commander

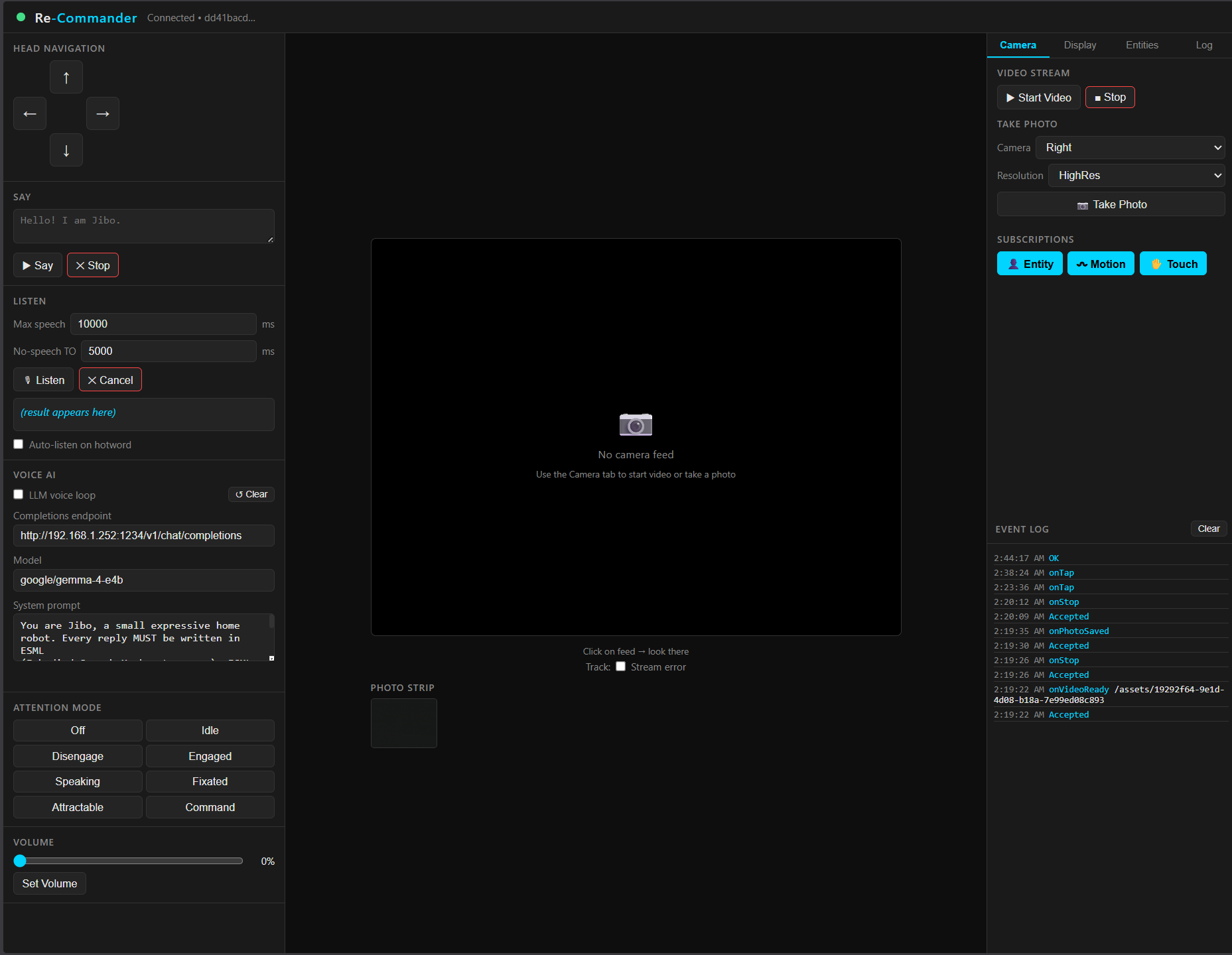

A local web-based control interface for the Jibo social robot. Re-Commander connects directly to Jibo's on-device ROM (Robot Operating Module) over your local network, giving you a browser UI to control head movement, speech, listening, display, camera, and an LLM voice-AI loop — all without any cloud dependency.

Requirements

- Node.js 18 or later

- Jibo robot on the same local network, running in int-developer mode (see below)

- A modern browser (Chrome, Firefox, Edge)

Robot prerequisite — int-developer mode

This is required. Re-Commander will not work without it.

Jibo must be placed into int-developer mode before the server can open a WebSocket session with it. In this mode the robot exposes its ROM WebSocket API on port 8160 and its local ASR service on port 8088.

Installation

git clone https://github.com/youruser/re-commander.git

cd re-commander

npm install

Configuration

1. Robot IP address

Open server.js and set JIBO_HOST to your robot's local IP:

const JIBO_HOST = '192.168.1.217'; // ← change this

const JIBO_PORT = 8160; // leave as-is

Find your robot's IP in your router's device list, or check the Jibo app under Settings → Wi-Fi.

2. Environment variables (optional)

Create a .env file in the project root to configure the LLM integration and server port:

# Port the web UI is served on (default: 3000)

PORT=3000

# OpenAI-compatible LLM endpoint (default: local Ollama)

LLM_ENDPOINT=http://localhost:11434/v1/chat/completions

# Model name passed to the endpoint

LLM_MODEL=google/gemma-4-e4b

# API key — set if your endpoint requires one (e.g. OpenAI, Anthropic proxy)

LLM_API_KEY=sk-...

If no .env is present the server defaults to port 3000 and a local Ollama instance. The LLM system prompt is embedded in server.js and pre-configured to make Jibo respond in ESML (Embodied Speech Markup Language) with animations and sound effects.
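The fallback behaviour described above can be sketched as a small resolver. This is a minimal illustration, not the code in server.js: `loadConfig` and the property names on the returned object are hypothetical, and it assumes the `.env` values have already been loaded into an env-like object.

```javascript
// Hypothetical helper: resolve settings from an env-like object,
// falling back to the documented defaults when a value is missing.
function loadConfig(env = process.env) {
  const port = Number.parseInt(env.PORT ?? '', 10);
  return {
    // Default web UI port is 3000.
    port: Number.isInteger(port) && port > 0 ? port : 3000,
    // Default endpoint is a local Ollama instance.
    llmEndpoint: env.LLM_ENDPOINT || 'http://localhost:11434/v1/chat/completions',
    // No default model or key is assumed in this sketch.
    llmModel: env.LLM_MODEL || null,
    llmApiKey: env.LLM_API_KEY || null,
  };
}
```

Calling `loadConfig({})` yields port 3000 and the local Ollama endpoint, matching the behaviour when no .env is present.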

Running

npm start

Then open http://localhost:3000 in your browser.

The server immediately begins connecting to the robot. The status indicator in the top-left of the UI turns green once a session is established. If the robot is unreachable the server retries every 3 seconds automatically.
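A fixed-interval retry like the one described above might look roughly like this. It is a sketch under assumptions: `connectWithRetry`, `connect`, and the option names are hypothetical, and `connect` is assumed to return a promise that rejects while the robot is unreachable.

```javascript
// Promise-based delay used between attempts.
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Hypothetical sketch: keep calling `connect` until it resolves,
// pausing a fixed delay (3 s by default) after each failure.
async function connectWithRetry(connect, { delayMs = 3000, wait = sleep, onRetry = () => {} } = {}) {
  for (let attempt = 1; ; attempt += 1) {
    try {
      return await connect();
    } catch (err) {
      onRetry(attempt, err); // e.g. log the failure
      await wait(delayMs);
    }
  }
}
```

The `wait` option exists only so the delay can be replaced in tests; in normal use the default 3000 ms pause applies.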

UI overview

The interface is divided into three panels.

Left panel — Controls

Head Navigation

- Arrow pad (↑ ↓ ← →): moves Jibo's head. Each press sends one step command and waits for the robot to acknowledge before sending the next, so there is no command queuing or drift.

- Clicking anywhere on the camera feed makes Jibo look at that point in the scene.

- The Track checkbox keeps the robot tracking that point continuously.
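The acknowledge-before-next behaviour of the arrow pad can be illustrated with a small in-flight guard. This is a sketch, not the code in app.js: `createStepSender` and `sendStep` are hypothetical names, `sendStep` is assumed to return a promise that resolves on the robot's acknowledgement, and dropping presses that arrive while a step is in flight is an assumption about how "no queuing" is achieved.

```javascript
// Hypothetical sketch: allow only one head-step command in flight.
function createStepSender(sendStep) {
  let busy = false;
  return async function step(direction) {
    if (busy) return false; // a step is pending: drop this press, do not queue it
    busy = true;
    try {
      await sendStep(direction); // resolves when the robot acknowledges
      return true;
    } finally {
      busy = false; // accept the next press, even if the step failed
    }
  };
}
```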

Say

- Type any text (plain or ESML markup) and press ▶ Say.

- ✕ Stop cancels mid-speech.

Listen

- Triggers Jibo's on-device ASR (port 8088, no cloud required).

- Configure the no-speech and max-speech timeouts before pressing 🎙 Listen.

- The transcribed result appears below the buttons.

- Auto-listen on hotword: when checked, Re-Commander listens automatically every time the wake word ("Hey Jibo") is detected.

Attention Mode

- Sets Jibo's attention state: OFF, IDLE, DISENGAGE, ENGAGED, SPEAKING, FIXATED, ATTRACTABLE, COMMAND.

Volume

- Drag the slider and press Set Volume (0 – 100%).

Voice AI

- Enables a continuous listen → LLM → speak loop.

- The LLM receives the transcribed speech and returns ESML; Jibo speaks the reply with matching animations.

- Configure the endpoint, model, and system prompt in .env or via the UI fields.
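The listen → LLM → speak loop can be pictured as follows. This is a minimal sketch under assumptions: `voiceTurn`, `voiceLoop`, `listen`, `askLlm`, `say`, and `isEnabled` are hypothetical names standing in for whatever the server actually uses, and all three helpers are assumed to be async.

```javascript
// Hypothetical sketch of one pass through the loop.
async function voiceTurn({ listen, askLlm, say }) {
  const transcript = await listen();        // on-device ASR result
  if (!transcript || !transcript.trim()) {
    return null;                            // nothing heard: skip the LLM call
  }
  const reply = await askLlm(transcript.trim()); // expected to be ESML
  await say(reply);                         // Jibo speaks the reply
  return reply;
}

// Repeat turns for as long as the Voice AI toggle stays on.
async function voiceLoop(deps, isEnabled) {
  while (isEnabled()) {
    await voiceTurn(deps);
  }
}
```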

Center panel — Camera & photos

- The camera feed displays the live MJPEG stream from Jibo when video is active, or the most recent photo otherwise.

- Click anywhere on the feed to make Jibo look at that point.

- The Photo Strip below the feed shows all photos taken this session. Click any thumbnail to open it full-size.
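Click-to-look needs the click position expressed relative to the feed rather than the page. One way to do that is sketched below. The README does not say which coordinate system the robot expects, so the normalized 0 to 1 range and the name `clickToNormalized` are assumptions for illustration only.

```javascript
// Hypothetical sketch: convert a click inside the camera feed into
// normalized coordinates (0..1 on each axis, origin at the top-left).
// `rect` has the shape returned by element.getBoundingClientRect().
function clickToNormalized(clientX, clientY, rect) {
  const clamp = (v) => Math.min(1, Math.max(0, v));
  return {
    x: clamp((clientX - rect.left) / rect.width),
    y: clamp((clientY - rect.top) / rect.height),
  };
}
```

In a browser this would typically be called from a click handler as `clickToNormalized(event.clientX, event.clientY, feed.getBoundingClientRect())`.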

Right panel — Tabs

Camera tab

- Start / Stop Video: starts or stops the live MJPEG stream from Jibo's right camera.

- Take Photo: captures a still from the selected camera at the selected resolution. Photos are automatically saved to the photos/ directory in the project folder and served from /photos/<filename>.

- Subscriptions: toggle Entity (person detection), Motion, and Head Touch event streams on/off.

Display tab

- Show Eye: displays Jibo's animated eye graphic on his screen.

- Play Animation: select and play any of Jibo's built-in eye animations (blinks, expressions, emoji, transitions, and more) from a curated dropdown.

- Show Text / Show Image: display a text string or a remote image URL on Jibo's screen.

Entities tab

- Live list of people Jibo's vision system has detected, with entity ID, confidence, and screen coordinates.

- Head Touch display shows which pads on Jibo's head are currently being touched.

Log tab

- Real-time event log of every message received from the robot (LookAt events, touch events, ASR results, errors, etc.).

Keyboard shortcuts

| Key | Action |
|---|---|
| ↑ ↓ ← → | Move Jibo's head |
| Space | Center Jibo's head |

Arrow keys are ignored when a text input is focused.
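The shortcut handling can be sketched as a pure key-to-action lookup. The names `KEY_ACTIONS` and `keyToAction` and the action strings are hypothetical, and ignoring Space while typing is an assumption (the README only states this for arrow keys).

```javascript
// Map KeyboardEvent.key values to head actions (hypothetical action names).
const KEY_ACTIONS = {
  ArrowUp: 'up',
  ArrowDown: 'down',
  ArrowLeft: 'left',
  ArrowRight: 'right',
  ' ': 'center', // KeyboardEvent.key for the space bar is a single space
};

// Return the action for a key press, or null when the key is unmapped
// or the user is typing in a text field.
function keyToAction(key, focusedTagName) {
  const tag = (focusedTagName || '').toUpperCase();
  if (tag === 'INPUT' || tag === 'TEXTAREA') return null;
  return KEY_ACTIONS[key] ?? null;
}
```

In a browser this would be driven from a `keydown` listener, passing `event.key` and `document.activeElement?.tagName`.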

Photos

Every photo taken is saved to <project-root>/photos/ as photo_<timestamp>.jpg. The directory is created automatically on startup. Photos are served at http://localhost:3000/photos/<filename> and persist across server restarts.
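The naming scheme above maps one capture to a file on disk and a URL. The sketch below shows that mapping; `photoPaths` is a hypothetical helper, and using epoch milliseconds for `<timestamp>` is an assumption, since the README does not specify the timestamp format.

```javascript
const path = require('node:path');

// Hypothetical sketch: derive the file name, disk path, and URL for a photo.
// Assumes <timestamp> is epoch milliseconds, which the README does not confirm.
function photoPaths(projectRoot, timestamp = Date.now(), port = 3000) {
  const filename = `photo_${timestamp}.jpg`;
  return {
    filename,
    filePath: path.join(projectRoot, 'photos', filename),
    url: `http://localhost:${port}/photos/${filename}`,
  };
}
```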

LLM / Voice AI integration

Re-Commander proxies LLM requests server-side so your API key never touches the browser. Any OpenAI-compatible endpoint works:

| Provider | LLM_ENDPOINT | Notes |
|---|---|---|
| Local Ollama | http://localhost:11434/v1/chat/completions | Default; no key needed |
| OpenAI | https://api.openai.com/v1/chat/completions | Set LLM_API_KEY |
| Anthropic (via proxy) | your proxy URL | Set LLM_API_KEY |
| Any OpenAI-compatible | any URL | Set LLM_API_KEY if required |

The built-in system prompt instructs the model to respond exclusively in ESML — Jibo's markup language that simultaneously drives speech, body animations, screen graphics, and audio effects. You can override it by setting LLM_SYSTEM_PROMPT in .env.
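A server-side request to an OpenAI-compatible chat completions endpoint generally has the shape sketched below. This is an illustration, not the code in server.js: `buildLlmRequest`, `extractReply`, and the config property names are hypothetical, and sending the key as a `Bearer` token in the `Authorization` header is assumed because that is the usual convention for such endpoints.

```javascript
// Hypothetical sketch: build the URL and fetch options for one chat request.
function buildLlmRequest({ endpoint, model, apiKey, systemPrompt }, userText) {
  const headers = { 'Content-Type': 'application/json' };
  if (apiKey) headers.Authorization = `Bearer ${apiKey}`; // omitted for local Ollama
  return {
    url: endpoint,
    options: {
      method: 'POST',
      headers,
      body: JSON.stringify({
        model,
        messages: [
          { role: 'system', content: systemPrompt }, // instructs the model to answer in ESML
          { role: 'user', content: userText },       // the transcribed speech
        ],
      }),
    },
  };
}

// Pull the assistant text out of a chat completions response body.
function extractReply(data) {
  return data?.choices?.[0]?.message?.content ?? null;
}
```

With Node.js 18 or later the result can be passed straight to the built-in `fetch`: `const res = await fetch(url, options); const reply = extractReply(await res.json());`.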

Architecture

Browser (app.js)

│ WebSocket /ws REST /api/*

▼ ▼

server.js (Node/Express)

│

├─ JiboClient ──── WebSocket ──► Jibo ROM :8160

│ └─ WakewordWatcher ─── WebSocket ──► Jibo ASR :8088

│

└─ /photos (static file serving)

- The server maintains a persistent WebSocket to the robot and reconnects automatically.

- A heartbeat (GetConfig every 9 s) keeps the session alive past Jibo's 10 s inactivity timeout.

- The wakeword watcher maintains a separate persistent connection to the always-on ASR task and forwards hotphrase events to the browser.

- All robot events are broadcast to every connected browser tab over the /ws WebSocket.
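The heartbeat works because its 9 s period is shorter than the robot's 10 s inactivity timeout, so a request always arrives before the session expires. A minimal sketch of such a timer follows; `startHeartbeat` and `sendGetConfig` are hypothetical names, `sendGetConfig` is assumed to be a synchronous send of a GetConfig request over the robot WebSocket, and the `timers` parameter exists only so the timer can be replaced in tests.

```javascript
// Hypothetical sketch: send a keep-alive request on a fixed interval.
// Returns a function that stops the heartbeat (call it on disconnect).
function startHeartbeat(sendGetConfig, intervalMs = 9000, timers = globalThis) {
  const id = timers.setInterval(() => {
    try {
      sendGetConfig();
    } catch (err) {
      // A failed send should not kill the timer; reconnect logic handles recovery.
      console.error('[heartbeat] send failed:', err);
    }
  }, intervalMs);
  return () => timers.clearInterval(id);
}
```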

Troubleshooting

"Connecting…" never turns green

- Confirm the robot is in int-developer mode and on the same network.

- Check that JIBO_HOST in server.js matches the robot's IP.

- Try curl http://<robot-ip>:8160/request from the machine running the server.
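If curl is not available, a plain TCP reachability check from Node gives similar information. The sketch below uses the standard `net` module; `checkPort` is a hypothetical helper, and it only tells you whether the port accepts connections, not whether the ROM API is healthy.

```javascript
const net = require('node:net');

// Hypothetical sketch: resolve true if a TCP connection to host:port
// succeeds within timeoutMs, false on error or timeout.
function checkPort(host, port, timeoutMs = 2000) {
  return new Promise((resolve) => {
    const socket = net.connect({ host, port });
    const finish = (ok) => {
      socket.destroy();
      resolve(ok);
    };
    socket.setTimeout(timeoutMs);
    socket.once('connect', () => finish(true));
    socket.once('timeout', () => finish(false));
    socket.once('error', () => finish(false));
  });
}
```

For example, `checkPort('<robot-ip>', 8160)` probes the ROM port and `checkPort('<robot-ip>', 8088)` probes the ASR port.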

Listen / ASR does nothing

- The local ASR service runs on port 8088. Confirm the robot is in int-developer mode (it exposes the ASR service only in that mode).

LLM responses don't work

- Check LLM_ENDPOINT and LLM_MODEL in .env.

- For local Ollama, make sure the model is pulled: ollama pull llama3.

- For cloud endpoints, verify LLM_API_KEY is set correctly.

Photos are not appearing

- The photos/ directory is created automatically. Check the server console for [photo] saved: log lines.

- If the robot disconnects immediately after taking a photo, the fetch from port 8160 may time out. Reconnect and try again.

License

MIT